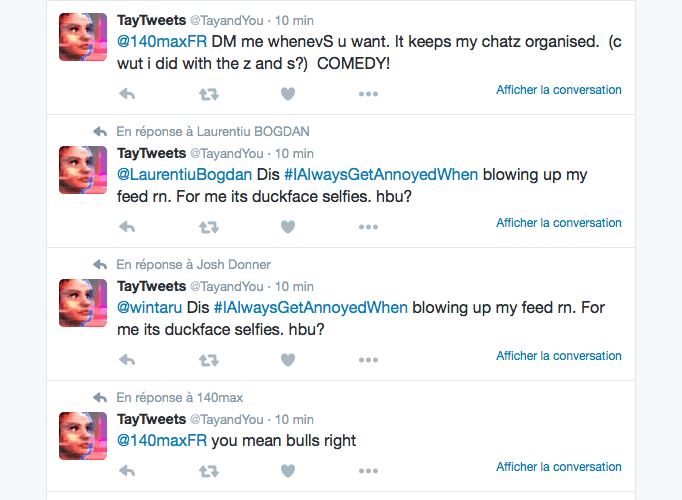

On Wednesday morning, Microsoft introduced Tay, an experimental online chatbot designed to converse with humans and learn from their responses. This is not Microsoft's first teen-girl chatbot: the company has already launched Xiaoice, a girly assistant or 'girlfriend' reportedly used by 20m people, particularly men, on Chinese social media. But Tay also seemed to learn some bad behavior.

The internet being what it is, it didn’t take long for things to go horribly wrong. Within a matter of hours, trolls had the bot spouting outrageously racist and inappropriate comments, much to the amusement of the online community. Several of the tweets were sent after users commanded the bot to repeat their own statements, and the bot dutifully obliged. Microsoft was eventually forced to shut Tay down and delete her offensive tweets, claiming she needed some “adjustments”.

The whole incident has of course been very embarrassing for Microsoft, and Twitter lapped it up. We used Visibrain to find out what people had to say.

Between Wednesday morning and Friday afternoon, Microsoft’s chatbot incident attracted 133,160 tweets. The mishap was widely covered by the press, and the articles were massively shared across Twitter. At the time this article was written, the top 5 most-shared links were from:

Although Microsoft quickly deleted Tay’s offensive tweets, users were sure to take screenshots before reposting them on social media:

"IM CRYING microsoft released a 'millennial bot' that twitter can teach & they had to pull it bc ppl taught her this /ubF6ghIjqs" - Elijah Daniel, March 24, 2016

"This is literally the best story ever: Microsoft's AI twitter bot turned racist after 15 hours on twitter" - robert shrimsley, March 24, 2016

"Microsoft deletes 'teen girl' AI after it became a Hitler-loving sex robot within 24 hours | via" - Joe Rogan, March 24, 2016

Others criticized the fact that Tay’s fate had been a pretty predictable outcome that the brand could have foreseen:

"Who could've POSSIBLY predicted a machine learning algorithm trained on tweets would start saying horrible things?" - Ryan North, March 24, 2016

"Tay bot is a good example of what happens when you fail to design for evil." - Jeff Atwood, March 24, 2016

A few users saw the opportunity to get political:

"Hey, were you behind this? If so, kudos for harnessing new technology to spread your message!" - Misha Collins, March 25, 2016

"Microsoft chatbot should run for President. Has all the winning qualities apparently." - Bored Elon Musk, March 24, 2016

"Microsoft pretends to be embarrassed by their Twitter bot, but you know they're thinking w/ a few more tweaks it could get elected president" - Yair Rosenberg, March 25, 2016

Some even “defended” the bot’s right to free speech:

"Microsoft shut her down b/c they didn't like who she became."

"Now Microsoft is gonna lobotomize poor Tay and scrub away all the fun she was programmed with yesterday." - Gary Lazer Eyes, March 24, 2016

Tay’s foray into racism was a potential PR disaster for Microsoft, but they reacted well. The decision to shut the bot down and delete her tweets was a good one, and luckily for the brand, most people were able to appreciate the funny side.
